Upgrading PostgreSQL

CLAIMS Direct now supports recent PostgreSQL versions (i.e. 12, 13, 14). The Alexandria schema (alexandria-schema-tools) and IFI CLAIMS Direct yum repository have been updated. They provide tools for quality control (cd-count.sh), bulk extraction (cd-extract.sh) and bulk loading (cd-load.sh).

Please note:

- Updates should be stopped (or paused) before proceeding. See apgupd for instructions.

- PostgreSQL must be configured to allow inbound and outbound communication via the pg_hba.conf, security group, and/or firewall.

Extraction

In order to migrate, the entire contents of the alexandria database must be extracted to disk.

Prerequisites

- A minimum of 2.0TB free space on the extract destination drive. You don't need a full database footprint as the extract files are compressed.

- Solid State Drives are required for better performance.

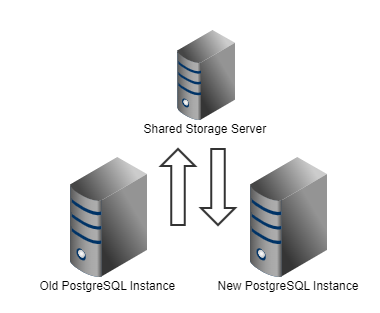

- Network connectivity between the two PostgreSQL databases is required. It is also possible to use a separate server either as an intermediary or to share the extract destination drive, e.g., via NFS between the current and new instance.

Process

Stop apgupd before proceeding (See apgupd for instructions.)

Extract the data from all applicable schema to the storage location.

The following script must be run on a drive with at least 2.0TB free.

First, the script:

cd-extract -h Usage: cd-extract.sh OPTIONS Option Description Default -------------------------------------------------------- -H|--host specify host localhost -P|--port specify port 5432 -d|--database specify database alexandria -u|--user specify database username alexandria -h|--help print this usage and exit Examples: cd-extract -h 10.10.10.2 -p 5432 -d alexandria -u alexandria cd-extract -h localhost -d alexandria -u alexandria

By default, this script uses the alexandria database located on localhost and outputs the compressed table data to ./data. If storage is tight on the CLAIMS Direct instance, you can also use an intermediary server to extract and load, or use a storage server to share disk to both the old and new CLAIMS Direct instances via NFS, e.g.,

For the sake of simplicity, we'll use the existing CLAIMS Direct instance (with the older version of PostgreSQL) for extraction and loading. If using a separate server, you will need to install PostgreSQL (the default version is sufficient: yum -y install postgresql), then replace localhost with the IP-of-CD-PostgreSQL-instance if you are using the older instance.

# Create a location for the extract mkdir EXTRACT && cd EXTRACT cd-extract.sh -H localhost -d alexandria -u alexandria # Please note, this process can take up to 3 days to complete, # Therefore, it is recommended to detach process using nohup nohup cd-extract.sh -H localhost -d alexandria -u alexandria & # Progress will be output to nohup.out (standard out for terminal invocation), e.g., Checking ./data ... not present, creating ... ok Extracting data ........ done

Loading

Prerequisites

- A new CLAIMS Direct instance. Please see the PostgreSQL Installation Instructions for step-by-step instructions.

- Access to the extract directory which contains the

datadirectory of the files created above. This could be via the older local CLAIMS Direct instance, an intermediate server, or a shared drive. - Installation of supplementary streams, e.g., litigation, entities etc., see installation instructions.

Process

Loading the data is accomplished in much the same way you loaded the original CLAIMS Direct instance: cd-load.sh or load.sh for earlier installs.

# Move into the directory created above cd EXTRACT # Load the data cd-load.sh -H IP -d alexandria -u alexandria # As above, this is a multi-day process and # It is therefore recommended to detach the process nohup cd-load.sh -H [IP-of-CD-PostgreSQL-instance] -d alexandria -u alexandria &